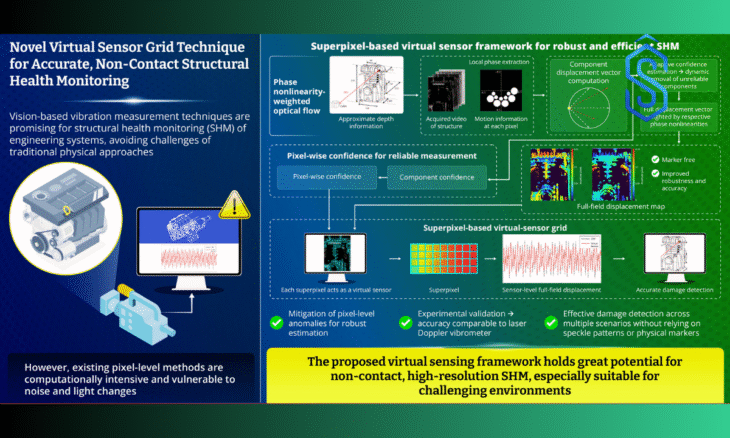

Researchers at Chonnam National University have developed a novel virtual sensor framework that significantly enhances the robustness and accuracy of vision-based structural health monitoring, while reducing reliance on physical sensors and surface modifications.

The new approach replaces traditional pixel-level sensing with superpixel-based virtual sensors, enabling stable and reliable vibration measurement even in complex environments.

Vision-based structural health monitoring has emerged as a cost-effective, non-contact alternative to conventional vibration-based assessment methods.

These techniques allow full-field vibration measurement directly from video data, eliminating the need for physical sensors that are often expensive, difficult to install, and limited in spatial coverage.

However, most existing vision-based approaches depend on pixel-level data, which is highly sensitive to noise, lighting variations, and image distortion, leading to instability and reduced reliability.

Virtual Sensor Framework: Robust, Marker-Free Infrastructure Monitoring

Structural health monitoring plays a critical role in ensuring the safety and performance of engineering systems across sectors such as aerospace, civil infrastructure, and industrial machinery.

Traditional monitoring methods typically rely on contact-type sensors to detect structural damage by analyzing changes in vibration characteristics. While effective, these methods face challenges including high costs, low spatial resolution, and restricted measurement zones.

To overcome these limitations, the research team led by Professor Gyuhae Park from the Department of Mechanical Engineering at Chonnam National University has proposed a superpixel-based virtual sensor framework designed for marker-free, full-field vibration measurement.

Instead of treating each pixel as an individual sensor, the framework groups neighboring pixels with similar vibrational and structural behavior into superpixels, which then function as virtual sensors. This creates an adaptive virtual sensor grid that aligns naturally with the structure being monitored.

“Our approach utilizes superpixels as virtual sensors for motion estimation, enabling robust and accurate full-field vibration measurement without physical markers or contact sensors,” explained Professor Park.

The study was first made available online on September 30, 2025, and was subsequently published in Volume 240 of Mechanical Systems and Signal Processing on November 1, 2025.

Virtual Sensor Framework: A Three-Stage Process

The proposed virtual sensor framework operates through a three-stage process. In the first stage, pixel-level motion is estimated from video sequences using the phase nonlinearity-weighted optical flow (PNOF) algorithm, previously developed by the research team.

This method extracts motion information from phase data and evaluates the reliability of displacement estimates in different directions, discarding unreliable components affected by phase nonlinearity.
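To illustrate the general idea of reading motion from phase data, the following is a minimal one-dimensional sketch. It is not the team's PNOF algorithm: it uses a generic complex Gabor filter, and the function names, filter settings, and test signal are all assumptions chosen for illustration. A small shift of a pattern between two frames shows up as a change in the local phase of the filter response, and dividing that phase change by the spatial frequency recovers the shift.

```python
import numpy as np


def gabor_response(signal, freq, sigma=8.0, half_width=24):
    """Filter a 1-D intensity profile with a complex Gabor kernel (hypothetical settings)."""
    k = np.arange(-half_width, half_width + 1)
    kernel = np.exp(-(k ** 2) / (2 * sigma ** 2)) * np.exp(1j * 2 * np.pi * freq * k)
    return np.convolve(signal, kernel, mode="same")


def phase_shift_estimate(frame0, frame1, freq):
    """Estimate per-sample displacement (in pixels) from the local phase difference."""
    r0 = gabor_response(frame0, freq)
    r1 = gabor_response(frame1, freq)
    # A shift of +d pixels changes the local phase by -2*pi*freq*d.
    dphi = np.angle(r1 * np.conj(r0))
    return -dphi / (2 * np.pi * freq)


# Synthetic example: a sinusoidal pattern shifted by 0.3 pixels between frames.
x = np.arange(256)
freq = 1 / 16          # cycles per pixel (assumed)
true_shift = 0.3       # pixels (assumed)
frame0 = np.cos(2 * np.pi * freq * x)
frame1 = np.cos(2 * np.pi * freq * (x - true_shift))

shift = phase_shift_estimate(frame0, frame1, freq)
estimated = float(np.median(shift[64:192]))  # ignore samples near the borders
print(f"estimated shift: {estimated:.3f} px")
```

The sketch has no reliability check. According to the article, PNOF additionally evaluates how trustworthy each directional estimate is and discards components affected by phase nonlinearity.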

In the second stage, the virtual sensor framework calculates an overall confidence value for the full displacement estimate at each pixel, introducing a built-in reliability assessment that distinguishes this method from existing vision-based vibration measurement techniques.

In the third stage, the confidence data and displacement maps are combined to segment pixels into superpixels, forming a virtual sensor grid.
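The grouping step can be pictured with a simple clustering sketch. The article does not describe the team's segmentation algorithm, so the code below swaps in a generic SLIC-style k-means over pixel position and displacement, with confidence used as a weight when cluster centres are updated. The function name, parameters, and synthetic data are all hypothetical.

```python
import numpy as np


def segment_superpixels(displacement, confidence, grid=(2, 2),
                        motion_weight=10.0, n_iter=10):
    """Cluster pixels by position and displacement (SLIC-style k-means sketch)."""
    h, w = displacement.shape
    rows, cols = np.mgrid[0:h, 0:w]
    # Feature vector per pixel: normalised row, normalised column, weighted motion.
    features = np.stack(
        [rows.ravel() / h, cols.ravel() / w, motion_weight * displacement.ravel()],
        axis=1,
    )
    conf = confidence.ravel()

    # Seed the cluster centres on a regular grid.
    seed_r = ((np.arange(grid[0]) + 0.5) * h / grid[0]).astype(int)
    seed_c = ((np.arange(grid[1]) + 0.5) * w / grid[1]).astype(int)
    seed_idx = (seed_r[:, None] * w + seed_c[None, :]).ravel()
    centres = features[seed_idx].copy()

    for _ in range(n_iter):
        # Assign every pixel to its nearest centre in feature space.
        dist = ((features[:, None, :] - centres[None, :, :]) ** 2).sum(axis=2)
        labels = dist.argmin(axis=1)
        # Move each centre to the confidence-weighted mean of its members.
        for k in range(len(centres)):
            mask = labels == k
            weights = conf[mask]
            if weights.sum() > 0:
                centres[k] = np.average(features[mask], axis=0, weights=weights)
    return labels.reshape(h, w)


# Synthetic example: the left half is still, the right half moves.
displacement = np.zeros((20, 20))
displacement[:, 10:] = 1.0
confidence = np.ones_like(displacement)

labels = segment_superpixels(displacement, confidence)
print(labels)
```

With a large `motion_weight`, superpixels do not straddle the boundary between the still and moving regions, which is the sense in which the resulting grid follows the structure's behavior rather than a fixed pixel lattice.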

Depth information is also incorporated to improve alignment between the virtual sensors and the physical structure. Displacement data is then calculated at the superpixel level to support structural damage detection.
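As a rough illustration of how a superpixel displacement signal could feed damage detection, the sketch below extracts the dominant vibration frequency with an FFT and compares a baseline recording with a later one. Tracking frequency shifts is a generic vibration-based indicator, not necessarily the criterion used in the study, and the frame rate, frequencies, and function name are assumed values.

```python
import numpy as np


def dominant_frequency(displacement, frame_rate):
    """Return the frequency (Hz) of the largest spectral peak in a displacement signal."""
    centred = displacement - displacement.mean()   # remove the static offset
    spectrum = np.abs(np.fft.rfft(centred))
    freqs = np.fft.rfftfreq(len(centred), d=1.0 / frame_rate)
    return float(freqs[spectrum.argmax()])


# Synthetic example: 2 s of video at 240 frames per second (assumed values).
frame_rate = 240.0
t = np.arange(480) / frame_rate
baseline = np.sin(2 * np.pi * 12.0 * t)   # hypothetical healthy response at 12 Hz
later = np.sin(2 * np.pi * 11.0 * t)      # hypothetical response after a stiffness loss

f_baseline = dominant_frequency(baseline, frame_rate)
f_later = dominant_frequency(later, frame_rate)
print(f"baseline: {f_baseline:.1f} Hz, later: {f_later:.1f} Hz, "
      f"shift: {f_later - f_baseline:+.1f} Hz")
```

The frequency resolution equals the frame rate divided by the number of frames (0.5 Hz here), so longer recordings are needed to resolve smaller shifts.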

Experimental validation conducted on an air compressor system demonstrated that the superpixel-based virtual sensor framework delivers accuracy comparable to that of a laser Doppler vibrometer, while eliminating the need for physical markers or contact sensors.

Although individual pixels exhibited variability, the superpixel-based virtual sensors effectively reduced noise-related inconsistencies.
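The noise reduction from pooling pixels can be shown with a small simulation. It assumes each pixel observes the same true motion plus independent noise and that the superpixel signal is a confidence-weighted average of its pixels. Both are simplifying assumptions for illustration, not details confirmed by the study, and all numerical values are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)

# True motion shared by every pixel inside one superpixel (assumed values).
frame_rate = 240.0
t = np.arange(480) / frame_rate
true_motion = 0.1 * np.sin(2 * np.pi * 12.0 * t)

# Each pixel sees the true motion plus its own independent noise.
n_pixels = 100
pixel_signals = true_motion + rng.normal(scale=0.05, size=(n_pixels, t.size))

# Hypothetical per-pixel confidence values used as averaging weights.
confidence = rng.uniform(0.5, 1.0, size=n_pixels)
superpixel_signal = np.average(pixel_signals, axis=0, weights=confidence)


def rms(error):
    """Root-mean-square of an error array."""
    return float(np.sqrt(np.mean(error ** 2)))


pixel_rms = rms(pixel_signals - true_motion)
superpixel_rms = rms(superpixel_signal - true_motion)
print(f"per-pixel RMS error:  {pixel_rms:.4f}")
print(f"superpixel RMS error: {superpixel_rms:.4f}")
```

For independent noise, averaging N pixels lowers the RMS error by roughly a factor of the square root of N, which is why a superpixel can be far steadier than any single pixel inside it.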

According to Professor Park, vibration-guided superpixel segmentation improves both the robustness and interpretability of structural diagnostics in challenging environments.

The framework enables low-cost, accessible, and scalable full-field structural monitoring using standard cameras, supporting applications across infrastructure monitoring, aerospace systems, mechanical equipment diagnostics, smart cities, robotics, and digital twin technologies.

This development advances vision-based structural health monitoring and may support wider adoption of non-contact, camera-based diagnostic systems.